Metadata-as-Code

Make business sense of technical structures in applications and databases, with automated end-to-end metadata management

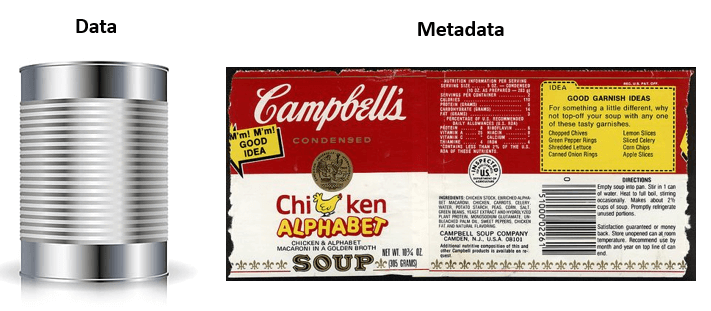

One of the primary challenges that severely constrains organizations is establishing a shared understanding of the context and meaning of data. Does everyone interpret a column name in a report the same way? Does the column name capture the exact nuances of what was measured? Plenty of business-facing data dictionaries, glossaries, and metadata management solutions try to address this issue. But even if business users are on the same page, does the tech side of the organization share the same understanding? What happens when applications evolve quickly and new columns are added at a rapid pace? Is the metadata used by the business keeping up with the changes in data structures?

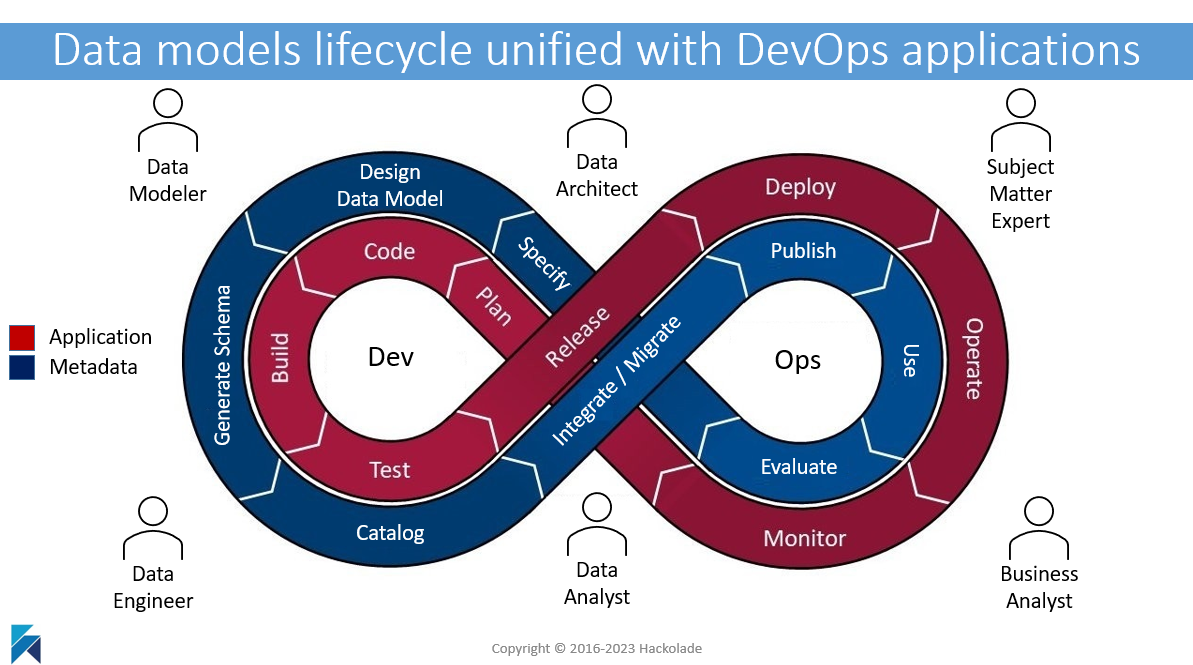

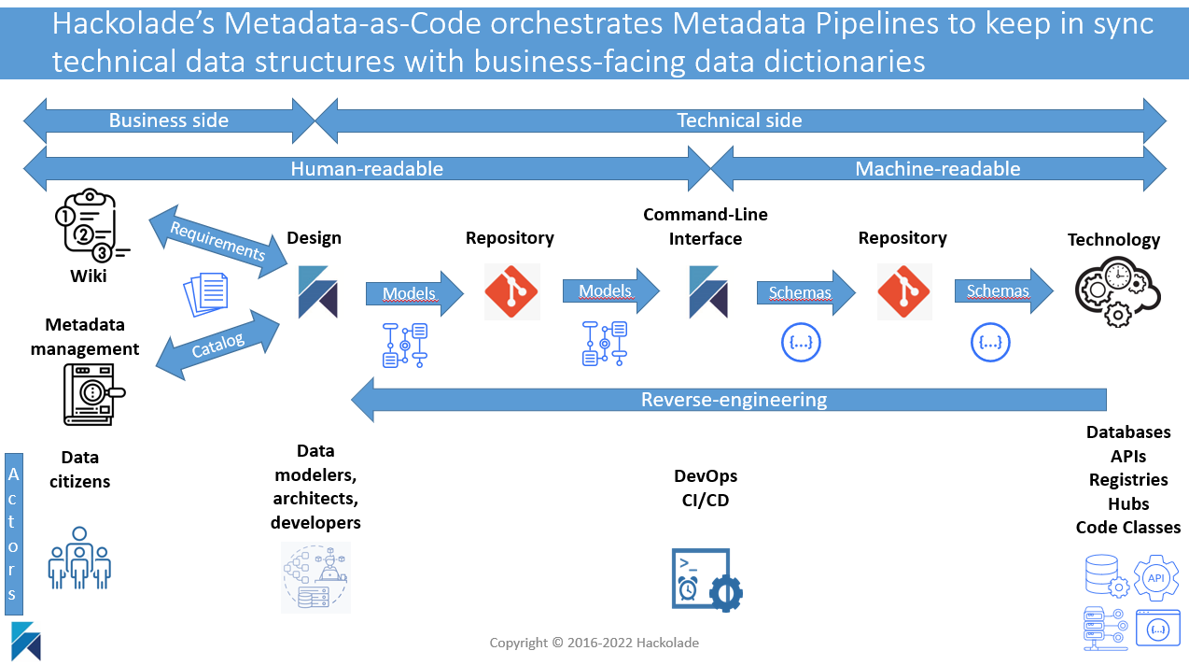

With Hackolade’s Metadata-as-Code strategy, data models are co-located with application code thanks to a tight integration with Git repositories and DevOps CI/CD workflows. As a result, data models and their schema artefacts closely follow the lifecycle of application development and deployment to operations. At the same time, technical structures are published in data catalogs to ensure a shared understanding of meaning and context with business users.

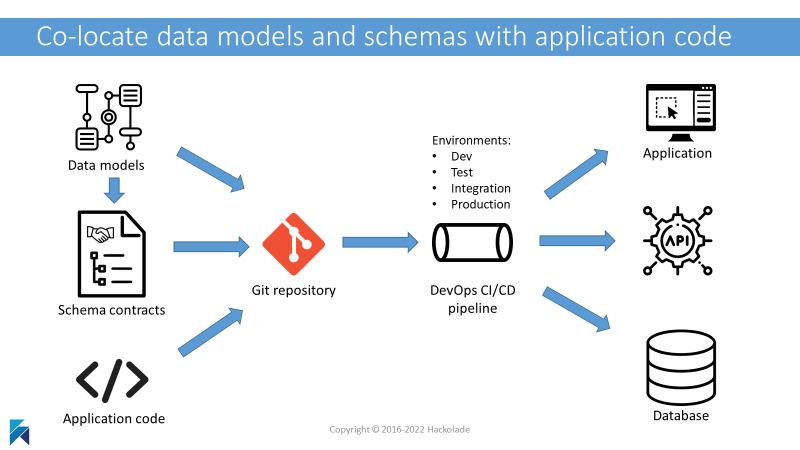

The solution is a single source-of-truth, shared across business and tech, and kept up-to-date through automated synchronization when structures evolve on the technology side. This single source-of-truth is a Git repository (or set of repositories) where Hackolade Studio Workgroup Edition manages the evolution of data models and schema designs (and ALTER scripts where applicable), co-located with application code. When a branch is deployed to production, the corresponding changes are published to business-facing data dictionaries.

tl;dr Accelerate your Digital Transformation and Cloud Migration

- For large and complex organizations sold on the benefits of data management and governance, modern architecture patterns (micro-services, event-driven architectures, self-service analytics, …) have created critical execution challenges jeopardizing the achievement of the strategic objectives in application modernization programs.

- The explosion of schemas in databases and data exchanges, combined with their rapid and constant evolution, requires next-generation tooling for the design and management of schemas in order to avoid chaos.

- Hackolade provides data architects with a single source of truth for data structures throughout their lifecycle, to harness the power of a growing number of technologies in enterprise data landscapes.

- Hackolade’s Metadata-as-Code orchestrates metadata pipelines to keep technical data structures in sync with business-facing data dictionaries.

We are often asked by prospects what sets Hackolade apart from traditional data modeling tools. There are many obvious differences:

- our unique ability to represent nested objects in Entity-Relationship diagrams and visualize physical schemas for "schemaless" databases;

- the simplicity and power of a minimalist and intuitive user interface;

- our vision to support a wide variety of modern storage and communication technologies, often cloud-based;

- our care and speed in responding to customer requests and suggestions (we release 50+ times per year);

- our product-led-growth strategy with no pushy salespeople;

- etc.

But we tend to think that the true revolution enabled by Hackolade is our Metadata-as-Code approach.

With Metadata-as-Code, we promote several use cases:

- co-location of data models with schema designs and application code, following a unified lifecycle so they are deployed together through CI/CD pipelines;

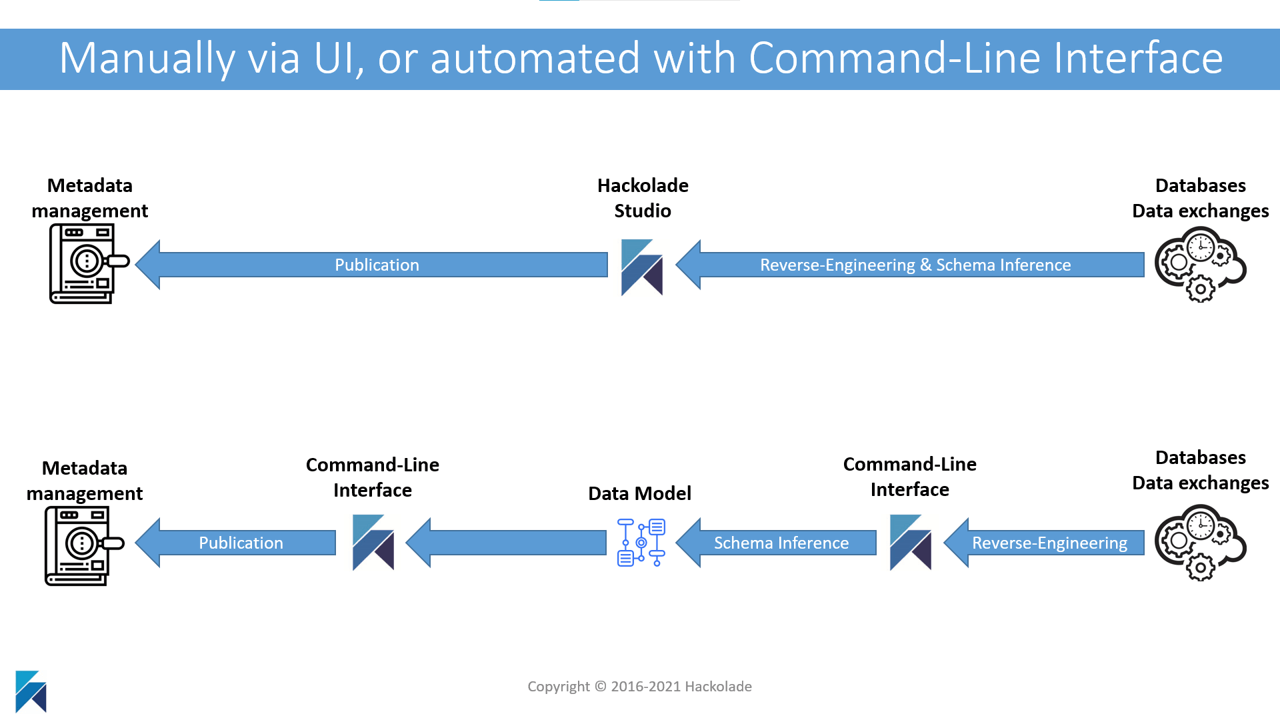

- automation of data modeling operations via our Command-Line Interface: forward- and reverse-engineering, format conversions, model comparisons, ALTER script generation, documentation generation, and more;

- publication to the business community: metadata management suites, data catalogs, and data dictionaries.
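To make the model-comparison and ALTER-script use case more concrete, here is a minimal Python sketch. It assumes a hypothetical, simplified model layout (a dict mapping table names to `{column: type}` dicts); it does not reproduce Hackolade's actual JSON model format or its CLI, it ignores added or dropped tables, and the type-change statement uses PostgreSQL syntax.

```python
from typing import Dict, List

# Hypothetical, simplified model layout: {table_name: {column_name: sql_type}}.
# This is NOT Hackolade's JSON model format; it only illustrates the idea.
Model = Dict[str, Dict[str, str]]


def alter_statements(old: Model, new: Model) -> List[str]:
    """Compare two model versions and emit ALTER statements for shared tables.

    Added or dropped tables are ignored to keep the sketch short.
    The type-change statement uses PostgreSQL syntax (ALTER COLUMN ... TYPE).
    """
    statements: List[str] = []
    for table in sorted(old.keys() & new.keys()):
        old_cols, new_cols = old[table], new[table]
        # Columns that exist only in the new version were added.
        for col in sorted(new_cols.keys() - old_cols.keys()):
            statements.append(f"ALTER TABLE {table} ADD COLUMN {col} {new_cols[col]};")
        # Columns that exist only in the old version were dropped.
        for col in sorted(old_cols.keys() - new_cols.keys()):
            statements.append(f"ALTER TABLE {table} DROP COLUMN {col};")
        # Columns present in both versions whose data type changed.
        for col in sorted(old_cols.keys() & new_cols.keys()):
            if old_cols[col] != new_cols[col]:
                statements.append(
                    f"ALTER TABLE {table} ALTER COLUMN {col} TYPE {new_cols[col]};"
                )
    return statements


# Two hypothetical versions of the same model, e.g. taken from two Git branches.
v1 = {"customer": {"id": "INTEGER", "name": "VARCHAR(100)"}}
v2 = {"customer": {"id": "BIGINT", "name": "VARCHAR(100)", "email": "VARCHAR(255)"}}

for stmt in alter_statements(v1, v2):
    print(stmt)
# ALTER TABLE customer ADD COLUMN email VARCHAR(255);
# ALTER TABLE customer ALTER COLUMN id TYPE BIGINT;
```

Because both versions are plain data, the same comparison can run unattended in a CI job whenever a branch changes the model.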

The ambition is to orchestrate the synchronization of technical schemas with business-facing data catalogs and dictionaries. Digital transformation initiatives run in agile mode require constant evolution of schemas to support new features requested by users. The only way to sustain quality and coordination at scale in such an environment is to co-locate data models and schemas with application code, then publish these structures so business users can specify context and meaning and leverage the information in self-service analytics.

Co-location of models, schemas, and code, for DevOps deployment

Data models provide an abstraction describing and documenting the information system of an enterprise. Data models provide value in understanding, communication, collaboration, and governance. They are great for iterating on designs without writing a line of code. They are easy for humans to read and can serve as a reference to feed data dictionaries used by the various data citizens on the business side.

Schemas provide “consumable” collections of objects describing the layout or structure of a file, a transaction, or a database. A schema is a contract between producers and consumers of data, and an authoritative source of structure.

With Hackolade Studio, you can easily create and maintain data models with Entity-Relationship Diagrams that are easy for the business community to understand.

The main technical outputs of the tool are schema contracts used in data exchanges.

Data models, along with schema designs and their subsequent evolutions, must closely follow the lifecycle of application code changes. Specifically, application changes are managed through Git repository branches so they can be tested and applied as a single atomic logical unit.

Our data models are persisted in open JSON files that are fully tracked “as code” by Git, rather than reinventing the wheel on this highly complex feature set. Hackolade Studio provides a user-friendly UI for data modelers not familiar with the Git command line, plus tight integration with Git, the most popular and pervasive source code control tool in organizations.

Command-Line Interface to automate conversions and operations

Our CLI lets customers automatically generate schemas and scripts, reverse-engineer an instance to infer its schema, and reconcile environments to detect drift in indexes or schemas. The CLI also lets customers automatically publish documentation to a portal or data dictionary when data models evolve.
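Schema inference for a "schemaless" instance and drift detection against a model can be illustrated with a short Python sketch. It assumes the documents have already been sampled into Python dicts and that the model is flattened into a hypothetical `{dotted_path: type}` map. This is a generic illustration, not how Hackolade's reverse-engineering works internally, and it does not inspect array contents or index definitions.

```python
from typing import Any, Dict, Iterable, List, Set


def json_type(value: Any) -> str:
    """Return a JSON type name for a Python value decoded from JSON."""
    # bool is checked before int/float because bool is a subclass of int.
    if value is None:
        return "null"
    if isinstance(value, bool):
        return "boolean"
    if isinstance(value, (int, float)):
        return "number"
    if isinstance(value, str):
        return "string"
    if isinstance(value, list):
        return "array"
    if isinstance(value, dict):
        return "object"
    return "unknown"


def flatten(doc: Dict[str, Any], prefix: str = "") -> Dict[str, str]:
    """Map every field, including nested sub-fields, to a dotted path and type."""
    paths: Dict[str, str] = {}
    for key, value in doc.items():
        path = prefix + key
        paths[path] = json_type(value)
        if isinstance(value, dict):
            # Recurse into nested objects; array contents are not inspected here.
            paths.update(flatten(value, path + "."))
    return paths


def infer_schema(docs: Iterable[Dict[str, Any]]) -> Dict[str, Set[str]]:
    """Union the paths seen across sampled documents, keeping every observed type."""
    schema: Dict[str, Set[str]] = {}
    for doc in docs:
        for path, typ in flatten(doc).items():
            schema.setdefault(path, set()).add(typ)
    return schema


def detect_drift(model: Dict[str, str], observed: Dict[str, Set[str]]) -> Dict[str, List[str]]:
    """Compare a flattened model {path: type} with an inferred schema."""
    return {
        "missing_in_instance": sorted(model.keys() - observed.keys()),
        "undeclared_in_model": sorted(observed.keys() - model.keys()),
        # A path drifts if the instance holds any type other than the declared one.
        "type_mismatch": sorted(
            p for p in model.keys() & observed.keys() if observed[p] != {model[p]}
        ),
    }


# Hypothetical model and sample documents.
model = {"_id": "string", "name": "string", "age": "number",
         "email": "string", "address": "object", "address.city": "string"}
docs = [
    {"_id": "a1", "name": "Ann", "age": 42, "address": {"city": "Paris"}},
    {"_id": "a2", "name": "Bob", "age": "37",
     "address": {"city": "Oslo", "zip": "0150"}, "vip": True},
]
print(detect_drift(model, infer_schema(docs)))
# {'missing_in_instance': ['email'], 'undeclared_in_model': ['address.zip', 'vip'], 'type_mismatch': ['age']}
```

Recording every observed type per path, rather than the first one seen, is what lets the sketch flag fields like `age` whose type varies across documents.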

Today, it has become obvious to many of our customers that success with self-service analytics, data meshes, micro-services, and event-driven architectures depends on maintaining end-to-end synchronization of data catalogs/dictionaries with the constant evolution of schemas for databases and data exchanges.

Publish to the business community of data citizens

In other words, the business side of human-readable metadata management must be up-to-date and in sync with the technical side of machine-readable schemas. And that process can only work at scale if it is automated.

It is hard enough for IT departments to keep schemas in sync across the various technologies involved in data pipelines. Yet for data to be useful, business users must also have an up-to-date view of the structures.

Metadata provides meaning and context to business users so they can derive precise knowledge and intelligence from data structures that may lack nuance or be ambiguous to interpret without thorough descriptions. This is critical for proper reporting and decision-making.

Hackolade Studio is a unique data modeling tool with the technical ability to facilitate strategic business objectives. Customers invoke Command-Line Interface functions from a DevOps CI/CD pipeline or a command prompt, often within Docker containers. Combined with Git as the repository for data models and schemas, users get change tracking, peer reviews, versioning with branches and tags, easy rollbacks, and offline mode.

Putting it all together is easy once all the pieces of the puzzle are integrated: design and maintain data models in Hackolade Studio, then publish to data dictionaries so business users always have an up-to-date view of the data structures deployed across the technology landscape, with synchronized schemas. Voilà!
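The publication step can be sketched as flattening a nested schema into rows for a data dictionary. This minimal Python example assumes the structure is available as a JSON Schema document (`properties`, `type`, and `description` are standard JSON Schema keywords). The row layout and the list of undocumented paths are hypothetical illustrations, not the import format of any particular data catalog or of Hackolade's own publishing integrations.

```python
from typing import Any, Dict, List


def dictionary_rows(schema: Dict[str, Any], prefix: str = "") -> List[Dict[str, str]]:
    """Flatten a nested JSON Schema into rows for a business-facing data dictionary."""
    rows: List[Dict[str, str]] = []
    for name, prop in schema.get("properties", {}).items():
        path = prefix + name
        typ = prop.get("type", "")
        # JSON Schema allows "type" to be a list, e.g. ["string", "null"].
        if isinstance(typ, list):
            typ = " | ".join(typ)
        rows.append({"path": path, "type": typ, "description": prop.get("description", "")})
        # Nested objects get their own rows, addressed by dotted paths.
        if "properties" in prop:
            rows.extend(dictionary_rows(prop, path + "."))
    return rows


# Hypothetical schema for illustration.
schema = {
    "type": "object",
    "properties": {
        "customerId": {"type": "string", "description": "Unique customer identifier"},
        "address": {
            "type": "object",
            "description": "Postal address",
            "properties": {"city": {"type": ["string", "null"]}},
        },
    },
}

rows = dictionary_rows(schema)
for row in rows:
    print(row["path"], row["type"], row["description"], sep="\t")

# Paths without a description are candidates for business-side documentation.
undocumented = [row["path"] for row in rows if not row["description"]]
print(undocumented)  # ['address.city']
```

Dotted paths keep nested attributes distinguishable once they land in a flat dictionary, and listing undocumented paths gives data stewards a concrete worklist for adding context and meaning.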

Note that some of our customers have pushed this even further. Leveraging custom properties, they implement model-driven code generation of data classes and REST APIs used in different application components to provide the foundation for architectural lineage.

With a single source-of-truth synchronized across business and tech, your organization is now able to make business sense of technical structures in applications and databases.

You will find more details in our online documentation.

Contact us at support@hackolade.com if you have any questions.